Synopsis

Hybrid Reasoning for Intelligent Systems is a research unit funded by the German Research Foundation (DFG). Its aim is to combine qualitative and quantitative forms of reasoning, resulting in hybrid reasoning formalisms. My PhD was funded through the unit's robotics project, C1, which was part of both phases of the research unit.

Project Phases

Phase 1: Planning and Action Control under Uncertainty for Mobile Manipulation Tasks

This phase dealt with incomplete knowledge and with actively reasoning about how to gather information. Our final demonstration combined several reasoning systems: Golog for the high-level strategic reasoning that determines relevant sub-goals, a PDDL-based planning system, and geometric planners for motion and manipulation planning.

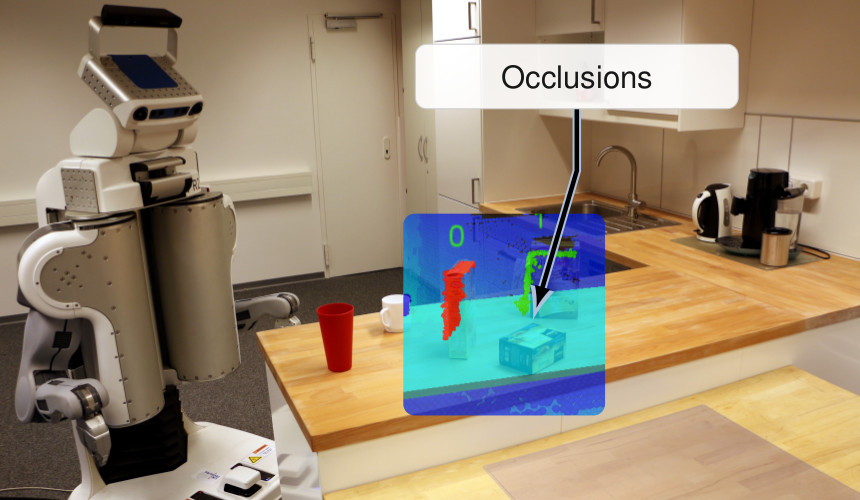

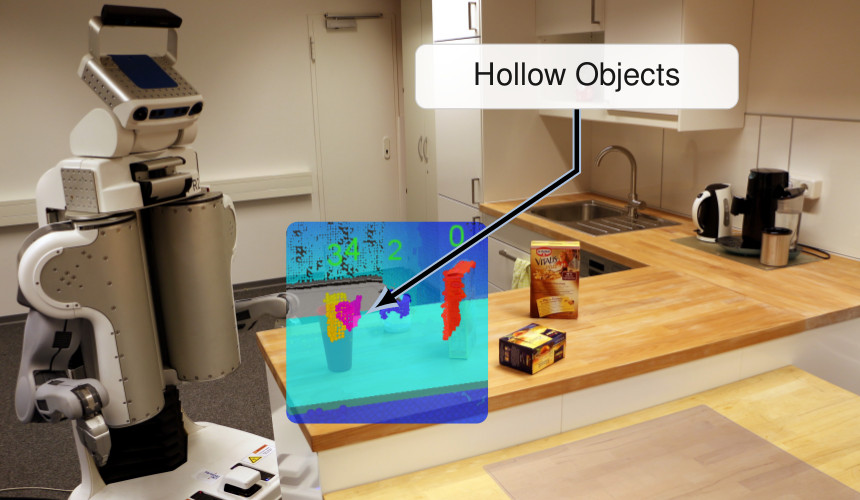

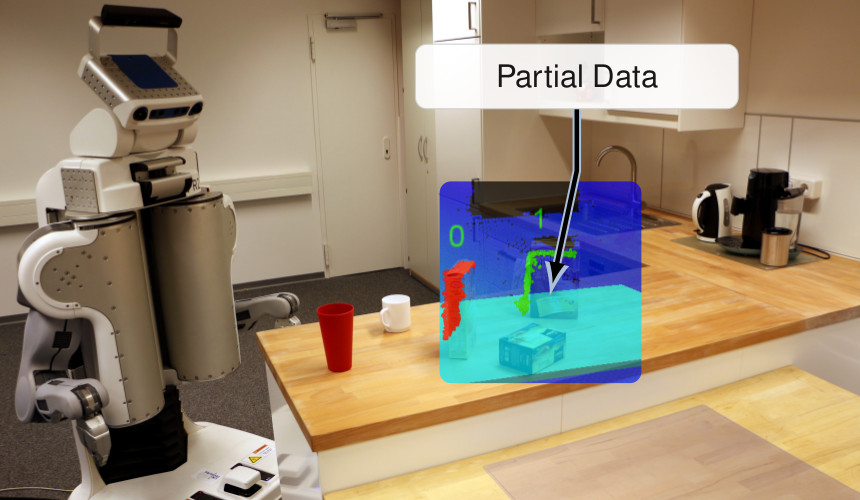

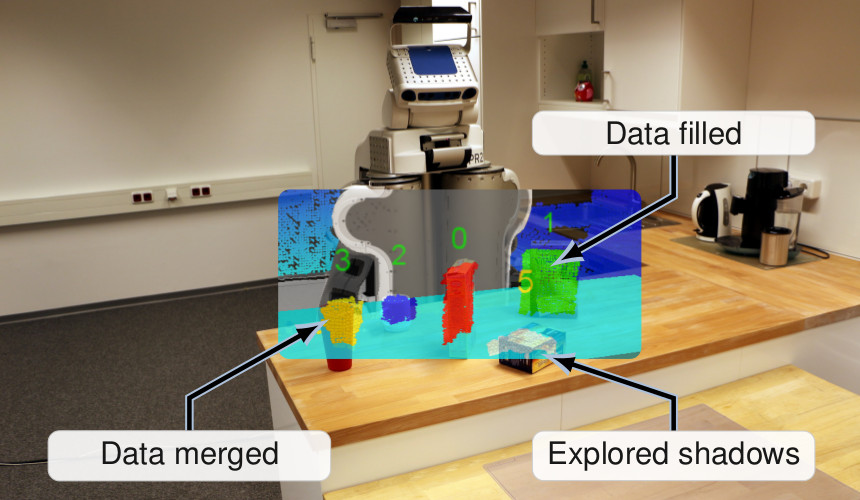

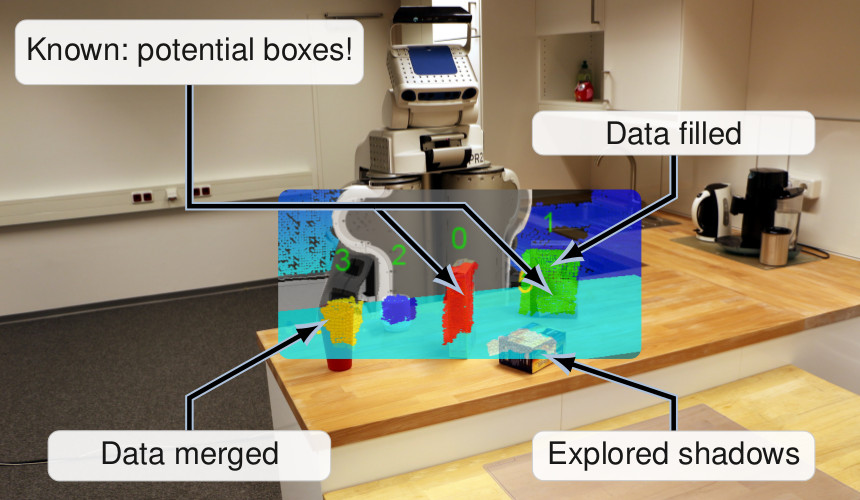

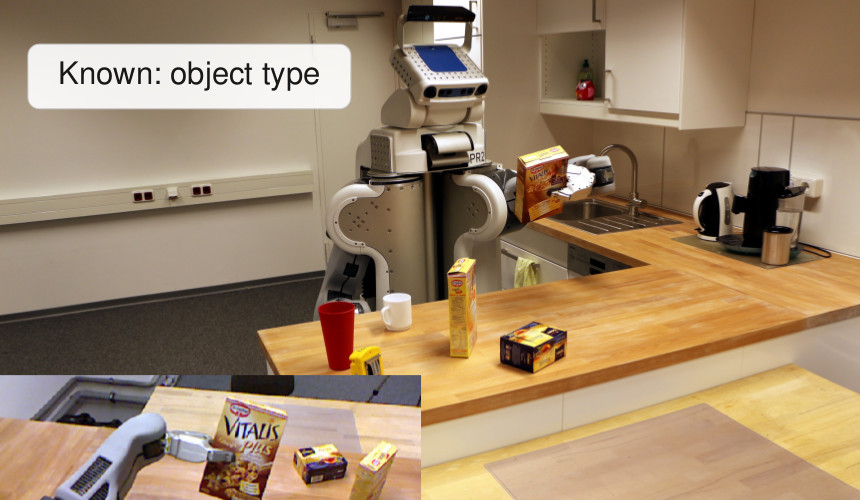

In the demo depicted below, the robot had to find a specific cereal box. However, several problems arise when only a single perspective is considered:

- occlusions: some objects may be hidden behind other objects when viewed from certain positions.

- hollow objects: some objects, such as cups, might appear as two separate objects, because when looking down at them the front and back faces may be seen separately.

- partial data: for some objects, such as the cereal boxes, not all surfaces are visible from most perspectives.

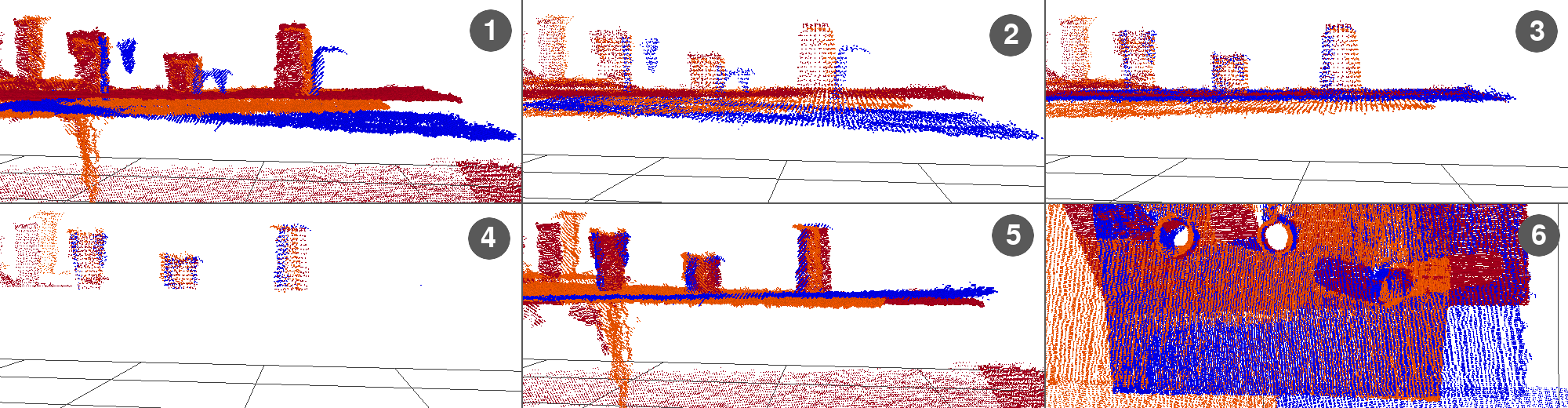

Our system reasons about whether sufficient information is available (Golog) and then formulates sub-goals for a PDDL-based planner. If the knowledge is sufficient, the robot proceeds with the primary task, e.g., picking up an object. Otherwise, it acquires a new perspective. After each new perspective, the point clouds are merged so that perception can work on the more complete scene. The image below shows the result, where sensor shadows have been explored, missing data has been filled in, and the data of hollow objects has been merged.
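The decision loop described above can be sketched roughly as follows. This is a minimal illustration in Python, not the project's Golog/PDDL implementation: `explore_until_sufficient`, `capture`, and `is_sufficient` are hypothetical names, and point clouds are simplified to lists of 3D tuples that are merged by plain concatenation.

```python
from typing import Callable, List, Tuple

Point = Tuple[float, float, float]


def explore_until_sufficient(
    viewpoints: List[str],
    capture: Callable[[str], List[Point]],
    is_sufficient: Callable[[List[Point]], bool],
) -> Tuple[List[Point], List[str]]:
    """Acquire perspectives one by one until the merged scene is judged sufficient.

    `capture` and `is_sufficient` are placeholders (assumptions) for the
    sensing step and for the reasoning step that decides whether the robot
    knows enough to continue with its primary task.
    """
    merged: List[Point] = []
    visited: List[str] = []
    for viewpoint in viewpoints:
        if is_sufficient(merged):
            break  # enough knowledge: continue with the primary task
        merged.extend(capture(viewpoint))  # merge the new perspective
        visited.append(viewpoint)
    return merged, visited


# Toy usage: each viewpoint contributes one point; two points count as "enough".
fake_views = {
    "front": [(0.0, 0.0, 0.0)],
    "left": [(1.0, 0.0, 0.0)],
    "back": [(2.0, 0.0, 0.0)],
}
scene, visited = explore_until_sufficient(
    ["front", "left", "back"],
    capture=lambda view: fake_views[view],
    is_sufficient=lambda points: len(points) >= 2,
)
print(visited)
```

In this toy run the third viewpoint is never visited, because the sufficiency check already succeeds after the second perspective has been merged.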

The core of the recognition system is my robot database. The reasoning system triggers the storing of perspectives as point clouds, which can later be retrieved together with the coordinate transforms that were valid at the time of recording. These transforms are necessary for the merging procedure.
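To illustrate why the recorded transforms matter for merging, here is a small sketch under simplifying assumptions. It is not the robot database's actual interface: `Recording`, `transform_point`, and `merge_recordings` are hypothetical names, and each transform is assumed to be a 4x4 homogeneous matrix that maps points from the sensor frame at recording time into one fixed frame.

```python
from dataclasses import dataclass
from typing import List, Tuple

Point = Tuple[float, float, float]
Matrix = List[List[float]]  # assumed: 4x4 homogeneous transform, sensor -> fixed frame


@dataclass
class Recording:
    """One stored perspective: a point cloud plus the transform valid when it was taken."""
    points: List[Point]
    sensor_to_fixed: Matrix


def transform_point(m: Matrix, p: Point) -> Point:
    """Apply the rotation and translation parts of a homogeneous transform to a point."""
    x, y, z = p
    return (
        m[0][0] * x + m[0][1] * y + m[0][2] * z + m[0][3],
        m[1][0] * x + m[1][1] * y + m[1][2] * z + m[1][3],
        m[2][0] * x + m[2][1] * y + m[2][2] * z + m[2][3],
    )


def merge_recordings(recordings: List[Recording]) -> List[Point]:
    """Bring every recording into the fixed frame and concatenate the points."""
    merged: List[Point] = []
    for rec in recordings:
        merged.extend(transform_point(rec.sensor_to_fixed, p) for p in rec.points)
    return merged


identity = [[1.0, 0.0, 0.0, 0.0],
            [0.0, 1.0, 0.0, 0.0],
            [0.0, 0.0, 1.0, 0.0],
            [0.0, 0.0, 0.0, 1.0]]
# Second view: the sensor sat 1 m further along x in the fixed frame.
shifted = [[1.0, 0.0, 0.0, 1.0],
           [0.0, 1.0, 0.0, 0.0],
           [0.0, 0.0, 1.0, 0.0],
           [0.0, 0.0, 0.0, 1.0]]

merged = merge_recordings([
    Recording([(0.0, 0.0, 0.0)], identity),
    Recording([(0.0, 0.0, 0.0)], shifted),
])
print(merged)
```

Both recordings contain the same sensor-frame point, yet they end up at different places in the fixed frame. Without the transform stored alongside each cloud, the two perspectives could not be aligned.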

An earlier version of the system, where the goal was simply to identify all objects on a tabletop scene, is shown in the video below.

Phase 2: Planning and Action Control for Robots in Human Environments

While the first phase of the project focused on active perception and reasoning under uncertainty for mobile manipulation tasks, the second phase will primarily address aspects of hybrid reasoning in the context of robots operating alongside humans in domestic environments.

In particular, we will develop methods that let a robot assist humans unobtrusively and remain highly adaptable during task planning and execution, taking into account that some actions need to be learned on the fly and that beliefs about the world may be incorrect (JETAI paper, CogRob paper). Among other things, this implies that acquiring user preferences will play a key role, so that the robot does not have to rely on explicit instructions by the user. Furthermore, task execution and planning must account for task interruptions and possible reorderings. As a special case, we will also consider a robot that has to learn a new skill on the fly, such as grasping an object of a new type.

Such flexibility has to be supported by a new kind of planning component that can react to changes quickly. For example, search spaces that have already been explored could be reused when replanning, and the geometric part of the planning problem could be made more transparent so that standard heuristic search techniques become applicable. All this will be based on Golog as the execution engine, which has to be extended to account for interruptions (ECAI paper), and on an accompanying database for recording acquired observations and beliefs (DB-based macro planning AAAI paper). Here we will, in particular, address the problem of revising incorrect beliefs. In the end, the approach will make it possible to implement a robotic system that can, for example, assist a host at a party by helping guests and serving snacks and drinks.

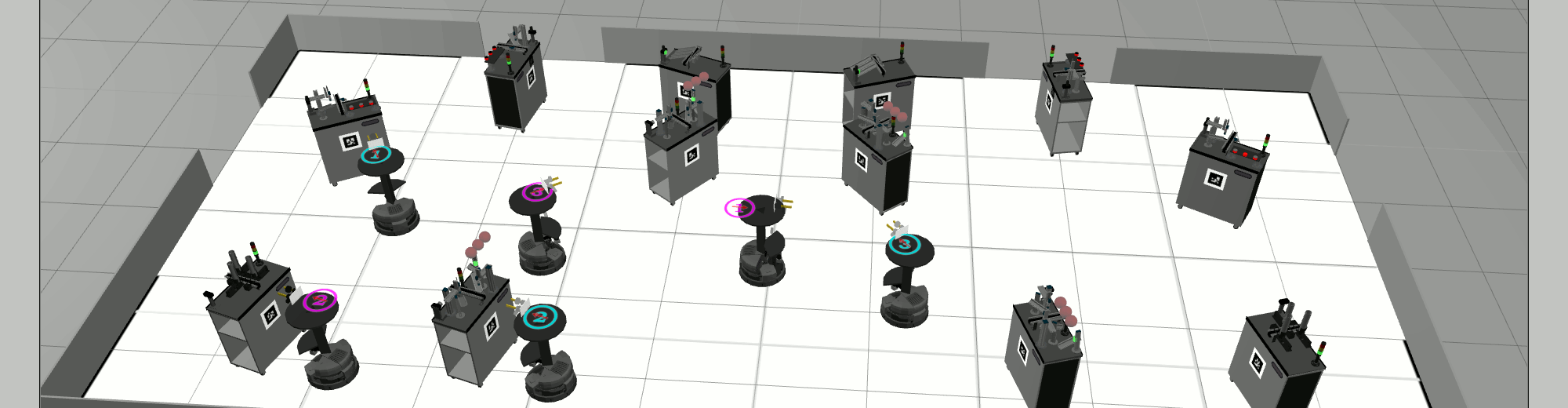

Additionally, in cooperation with partners from sub-project A2 of the research unit (Advanced Solving Technology for Dynamic and Reactive Applications), a centralized planner based on Answer Set Programming has been developed for the RoboCup Logistics League. The planner operates in a time-bounded fashion: a look-ahead in real-world execution time is specified, and the planner determines a possible course of action for that window. Planning runs in parallel to the execution of a previous plan, and the ongoing actions are taken into account. It is a process-oriented scheme that maximizes a given metric and can therefore use non-optimal solutions, rather than only accepting solutions that reach a specified goal state. Planning occurs virtually continuously, and the executive can switch to plans found later that have a better metric score, as long as they are compatible with the current state. Otherwise, planning is restarted. A paper published at ICAPS 2018 describes the details of the approach.
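The switching rule of the executive could be sketched as follows. This is only one possible reading of the description above, written in Python rather than Answer Set Programming: `Plan`, `consider_candidate`, and the compatibility check are hypothetical, a higher score is assumed to be better, and the sketch assumes that a better but incompatible candidate is what triggers the restart of planning.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass(frozen=True)
class Plan:
    name: str
    score: float  # assumed: higher metric score is better


def consider_candidate(
    current: Plan,
    candidate: Plan,
    is_compatible: Callable[[Plan], bool],
) -> Tuple[Plan, bool]:
    """Return (plan to keep executing, whether planning should be restarted)."""
    if candidate.score <= current.score:
        return current, False  # no improvement: keep the running plan
    if is_compatible(candidate):
        return candidate, False  # better and still applicable: switch
    return current, True  # better but outdated: keep executing, replan


running = Plan("deliver-one-product", 10.0)
better = Plan("deliver-two-products", 20.0)
stale = Plan("deliver-three-products", 30.0)


def compatible_with_state(plan: Plan) -> bool:
    # Toy stand-in for checking a plan against the current world state.
    return plan.name != "deliver-three-products"


chosen, restart = consider_candidate(running, better, compatible_with_state)
chosen_after_stale, restart_after_stale = consider_candidate(
    chosen, stale, compatible_with_state
)
print(chosen.name, restart, chosen_after_stale.name, restart_after_stale)
```

The point of the design is that execution never waits for an optimal plan: the robot keeps acting on the best compatible plan found so far while the planner continues in the background.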
